California Startup Unveils Groundbreaking Optical Interconnect Technology to Revolutionize AI Computing

04/03 2025

Produced by Zhinengzhixin

California-based startup Lightmatter has introduced two optical interconnect products, Passage L200 and Passage M1000, designed to relieve the growing bandwidth bottleneck in multi-chip AI systems. By replacing traditional electrical signals with optical ones, they reach I/O bandwidths of up to 256 Tbps and 114 Tbps respectively, which is intended to significantly improve AI computing performance and efficiency.

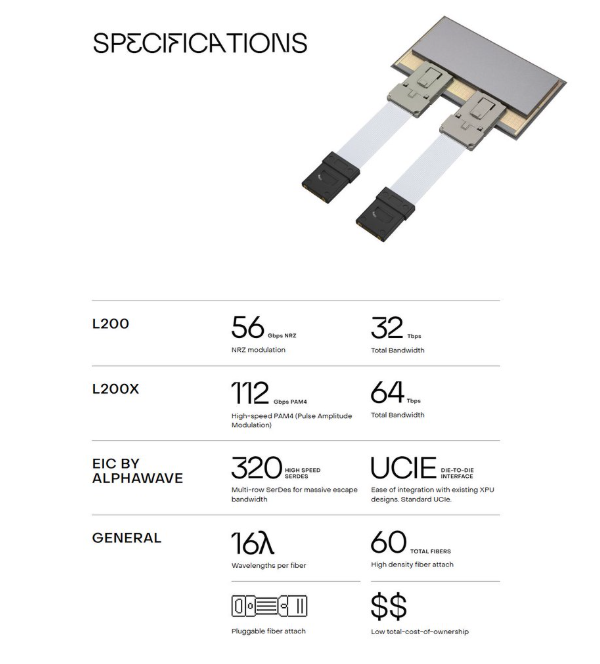

● Passage L200, billed as the world's first 3D co-packaged optics (CPO) product, is available in 32 Tbps and 64 Tbps configurations and is said to cut AI model training time to as little as one-eighth of its previous duration.

● Passage M1000 is a photonic superchip reference platform supporting ultra-large-scale multi-chip AI processor designs.

In late 2024, Lightmatter secured a $400 million Series D funding round, bringing its total funding to $850 million and valuing the company at $4.4 billion. Working with manufacturing partners GlobalFoundries, ASE, and Amkor, Lightmatter plans to launch the products in 2025 and 2026.

Amid soaring demand for AI computing, Lightmatter's technology aims to raise data center performance while improving energy efficiency and scalability.

Part 1

Lightmatter's Optical Interconnect Technology: A Technological Leap that Surpasses Traditional Boundaries

● Passage L200

Lightmatter describes Passage L200 as the world's first 3D co-packaged optics (CPO) product, built for the next generation of XPUs (processors, accelerators, or GPUs) and switch silicon. Because the optical devices are integrated directly into the chip package, I/O no longer has to be placed along the chip edge, giving what the company calls an "edgeless I/O" design.

The family comes in a 32 Tbps version (L200) and a 64 Tbps version (L200X), offering total I/O bandwidth of up to 256 Tbps per package, a 5 to 10 times improvement over existing solutions.

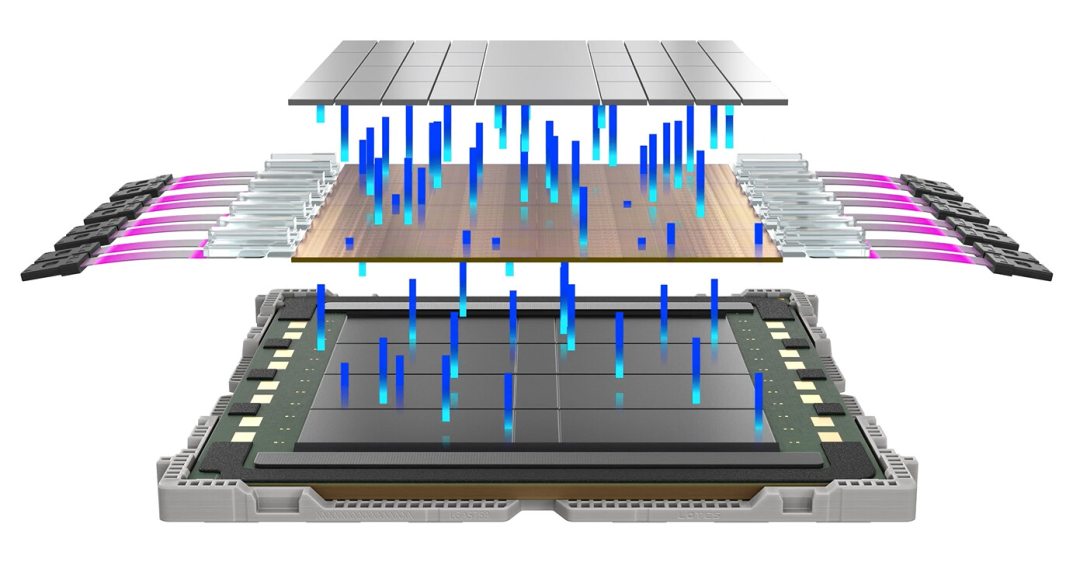

◎ Passage L200 adopts a 3D stacked architecture, placing Alphawave's I/O chips alongside customer XPU dies, enabling vertical communication through Lightmatter's Passage active optical interposer.

◎ The I/O and XPU chiplets are mounted on the Passage interposer using standard chip-on-wafer technology and communicate over the UCIe protocol, so no software modifications are required.

◎ Through-silicon vias (TSVs) deliver power from the underlying circuit board up to the XPU on top, ensuring an efficient power supply.

Each 64 Tbps version comprises four I/O chips and provides 1.6 Tbps of bandwidth per fiber (16 wavelengths at 112 Gbps each), said to be 18 times faster than current NVLink technology and twice as fast as traditional pluggable optics.

Passage L200's high bandwidth and low latency make it well suited to AI computing. For instance, a package built on the 64 Tbps version offers up to 256 Tbps of I/O bandwidth, sharply reducing data transfer time and reportedly accelerating AI model training by up to 8 times compared with traditional technologies.

Moreover, optical transmission generates less heat than electrical signaling, which reduces both power consumption and cooling requirements.
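The L200 figures quoted above can be cross-checked with a little arithmetic. The sketch below uses only the article's numbers; the variable names are illustrative, and the reading that the 1.6 Tbps per-fiber figure is a rounded or net-of-overhead rate is an assumption rather than something Lightmatter has stated here.

```python
# Back-of-the-envelope check of the Passage L200 figures quoted in the article.
# Every input is a number from the article, expressed in Gbps so the
# arithmetic stays in integers. Names are illustrative, not Lightmatter's.

GBPS_PER_WAVELENGTH = 112        # per-wavelength rate
WAVELENGTHS_PER_FIBER = 16       # wavelengths carried on one fiber
QUOTED_GBPS_PER_FIBER = 1_600    # the "1.6 Tbps per fiber" figure
VERSION_GBPS = 64_000            # the 64 Tbps version
IO_CHIPS_PER_VERSION = 4         # I/O chips in the 64 Tbps version
PACKAGE_GBPS = 256_000           # the "256 Tbps per package" figure


def raw_fiber_gbps(gbps_per_wavelength, wavelengths):
    """Raw line rate of one fiber: per-wavelength rate times wavelength count."""
    return gbps_per_wavelength * wavelengths


raw_gbps = raw_fiber_gbps(GBPS_PER_WAVELENGTH, WAVELENGTHS_PER_FIBER)

# Per-wavelength rate implied by the quoted per-fiber figure (assumed to be a
# payload or rounded rate, since it is lower than the raw product).
implied_gbps_per_wavelength = QUOTED_GBPS_PER_FIBER // WAVELENGTHS_PER_FIBER

gbps_per_io_chip = VERSION_GBPS // IO_CHIPS_PER_VERSION
fibers_at_quoted_rate = VERSION_GBPS // QUOTED_GBPS_PER_FIBER
package_to_version_ratio = PACKAGE_GBPS // VERSION_GBPS

print(f"raw per-fiber rate:          {raw_gbps} Gbps")
print(f"implied rate per wavelength: {implied_gbps_per_wavelength} Gbps")
print(f"bandwidth per I/O chip:      {gbps_per_io_chip} Gbps")
print(f"fibers at the quoted rate:   {fibers_at_quoted_rate}")
print(f"package / version ratio:     {package_to_version_ratio}")
```

The raw product of 16 wavelengths at 112 Gbps is 1,792 Gbps, slightly above the quoted 1.6 Tbps, which corresponds to 100 Gbps per wavelength. At the quoted rate, the 64 Tbps version works out to 16 Tbps per I/O chip and 40 fibers, and the 256 Tbps package figure is exactly four times 64 Tbps.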

Nick Harris, CEO of Lightmatter, stated: "L200 and L200X will be fully available in 2026. We are integrating with customer chips to revolutionize the hardware architecture of AI computing."

● Passage M1000

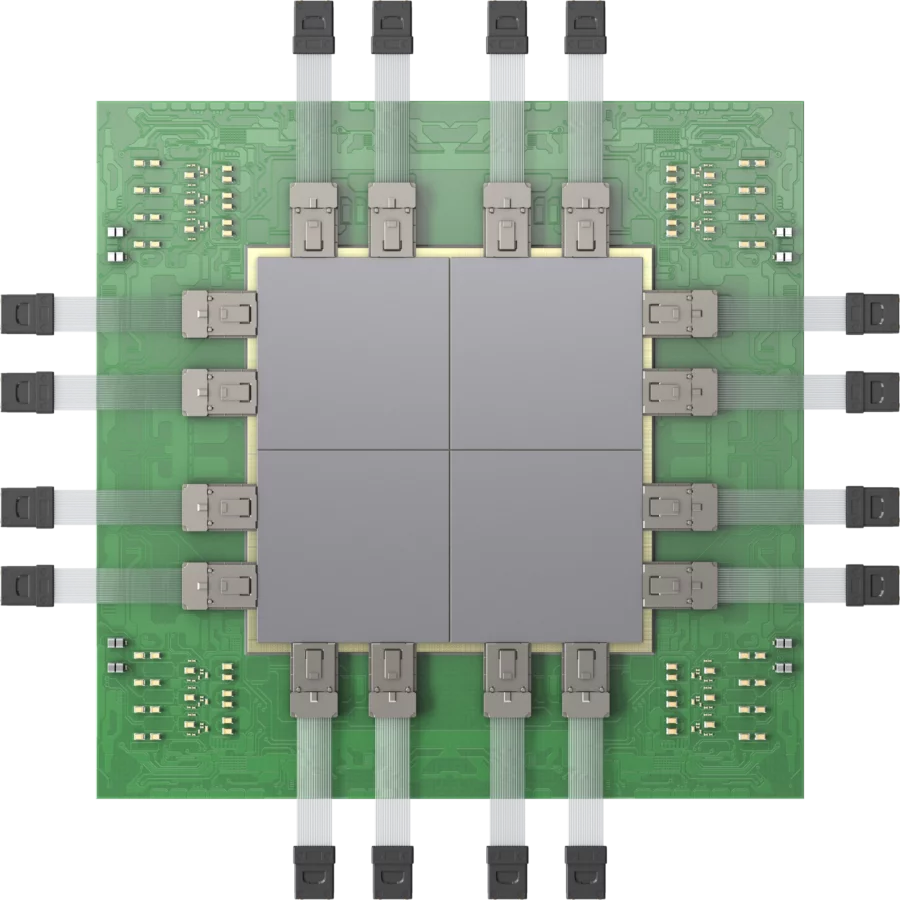

Passage M1000 is Lightmatter's photonic superchip reference platform, leveraging the same Passage active optical interposer technology to support ultra-high-performance multi-chip AI processors.

◎ The platform establishes a programmable waveguide network through solid-state optical switches, offering a total optical bandwidth of up to 114 Tbps and supporting 1.5 kW of power per package.

◎ Passage M1000 employs a multi-reticle active photonic interposer spanning over 4000 square millimeters, making it the world's largest 3D packaged chip complex.

◎ It supports 256 fiber edge connections with a bandwidth of 448 Gbps per fiber, enabling connections to up to 2000 GPUs within an extended domain.
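The M1000 bandwidth figures in the list above are consistent with one another, as a short calculation shows. This is a sketch using only the article's numbers; the function and variable names are illustrative.

```python
# Cross-check of the Passage M1000 figures quoted in the article:
# 256 edge-attached fibers at 448 Gbps each versus "up to 114 Tbps" total.

FIBERS = 256                # fiber edge connections
GBPS_PER_FIBER = 448        # bandwidth per fiber
QUOTED_TOTAL_TBPS = 114     # total optical bandwidth quoted for the platform


def aggregate_gbps(fibers, gbps_per_fiber):
    """Aggregate bandwidth across all fibers, in Gbps."""
    return fibers * gbps_per_fiber


total_gbps = aggregate_gbps(FIBERS, GBPS_PER_FIBER)
total_tbps = total_gbps / 1000  # 1 Tbps = 1000 Gbps

print(f"aggregate fiber bandwidth: {total_tbps:.3f} Tbps")
print(f"quoted total:              {QUOTED_TOTAL_TBPS} Tbps")
```

The product is 114.688 Tbps, which matches the quoted 114 Tbps once rounded down.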

Harris emphasized: "M1000 is theoretically the smallest high-bandwidth package. Traditional CPO technologies struggle with bulkiness and heat dissipation due to external optical modules, while M1000 integrates optics within the chip, resolving these issues."

Passage M1000 is particularly suited to AI tasks that demand massive computational resources, such as training and inference for large language models (LLMs). Because optical interconnect removes the I/O edge limitation, M1000 allows SerDes to be placed anywhere within the package, offering far greater flexibility and scalability. Lightmatter positions it as a platform for building million-node AI computing clusters; M1000 is scheduled for release in summer 2025.

● Lightmatter's optical interconnect technology presents substantial advantages over traditional electrical interconnect:

◎ Ultra-high Bandwidth: Optical signals carry more data in less physical space, giving L200 and M1000 bandwidths far beyond those of traditional technologies.

◎ Low Latency: Optical transmission reduces data latency, improving the real-time performance of AI computing.

◎ Low Energy Consumption: Optical signaling dissipates less heat, which helps reduce data center power consumption.

◎ High Scalability: Optical interconnect lets computing resources be expanded smoothly, keeping pace with the rapid growth of AI demand.

By deeply integrating optical technology with computing units, Lightmatter aims to remove the limitations of traditional chip interconnect.

Part 2

Market Impact and Lightmatter's Strategic Positioning

With the rise of generative AI and large language models such as GPT-4, demand for AI computing is surging. Training these models requires thousands to tens of thousands of GPUs working in unison, pushing traditional electrical interconnect technology to its bandwidth and energy consumption limits.

For instance, NVIDIA's latest NVLink platform (NVL72) offers 7 Tbps inter-chip transfer speed but still falls short of future AI cluster needs.

Moreover, high latency and energy consumption issues in electrical interconnect further hinder computing cluster efficiency. Lightmatter's optical interconnect technology emerges as a game-changer, with products already boosting chip interconnect speeds to 30 Tbps and plans for 100 Tbps versions.

Against that electrical baseline, Passage L200 and M1000 offer bandwidths of up to 256 Tbps and 114 Tbps respectively. Moving data optically raises transfer efficiency and lowers energy consumption, offering a more sustainable path for AI data centers.

In late 2024, Lightmatter completed a $400 million Series D funding round led by T. Rowe Price Associates, with participation from existing investors such as GV (Google Ventures), bringing total funding to $850 million and valuing the company at $4.4 billion. The funding is intended to accelerate product development and market launch.

Lightmatter also works with industry leaders GlobalFoundries, ASE, and Amkor: it uses GlobalFoundries' Fotonix platform to manufacture its silicon photonics chips and relies on ASE and Amkor for packaging, which supports product quality and volume production.

Harris said, "We emulate TSMC's business model, not favoring any customer but providing a technology platform to aid their market expansion." This open approach helps Lightmatter build broad influence across the AI computing ecosystem.

Lightmatter also lowers the barrier to adoption through its Idiom software platform. Idiom enables developers to run models built with PyTorch, TensorFlow, or ONNX directly on Lightmatter's photonic architecture without code modifications, a compatibility that should ease market acceptance.

Furthermore, Idiom provides model optimization tools like compression and quantization, helping developers reduce model size while maintaining performance, catering to diverse AI application needs.
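To illustrate what quantization means in general, here is a minimal sketch of symmetric 8-bit quantization in plain Python. It is a generic textbook technique and does not represent Idiom's API or implementation; the function names and sample weights are hypothetical.

```python
# Generic symmetric int8 quantization sketch. NOT Idiom's API: all names here
# are hypothetical and chosen only to illustrate the general idea.

def quantize_int8(weights):
    """Map a non-empty list of floats to integers in [-127, 127] plus a scale."""
    max_abs = max(abs(w) for w in weights)
    # Guard against an all-zero input, where any scale would do.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the integers and the scale."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.02, 1.0]            # made-up example values
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized:", quantized)
print("max round-trip error:", max_error)
```

Each weight is stored as one signed byte instead of a 32-bit float, at the cost of a round-trip error of at most half the scale per value. That is the size-versus-accuracy trade-off such tools manage.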

As demand for AI computing continues to soar, optical interconnect technology is widely seen as a key to relieving data center bottlenecks. With its technical strength and first-mover advantage in silicon photonics, Lightmatter has emerged as a leader in this domain.

Competition is intensifying, however, with companies such as Celestial AI exploring similar photonic technologies. Lightmatter is shoring up its market position through collaborations with industry giants and a combined software and hardware ecosystem.

The launches of Passage M1000 (2025) and L200/L200X (2026) are expected to further strengthen its position in the AI computing market.

Summary

Lightmatter's Passage L200 and M1000 represent a significant advance in AI computing hardware. By using optical signals for chip interconnect, they ease the bandwidth and energy consumption constraints of traditional electrical signaling, providing efficient and scalable solutions for AI data centers.